Meta is tightening its safety controls for young users in Malaysia, and the timing is not accidental. As the country moves closer to stricter social media rules for children and teenagers, the company is putting more attention on how younger users interact with Facebook, Instagram, and Messenger. Through its Teen Accounts system, Meta says it is building in stronger protections by default rather than expecting teens or parents to configure everything manually.

That matters because the broader conversation in Malaysia is clearly shifting. There is growing pressure to make social media safer for minors, especially as policymakers push the idea that children under 16 should not be operating fully independent personal accounts. Under current recommendations, those younger users are expected to rely only on accounts managed by parents. Against that backdrop, Meta's latest safeguards look like both a safety update and a sign that major platforms are preparing for a tougher regulatory environment.

Teen Accounts Are Now the Starting Point, Not an Optional Setting

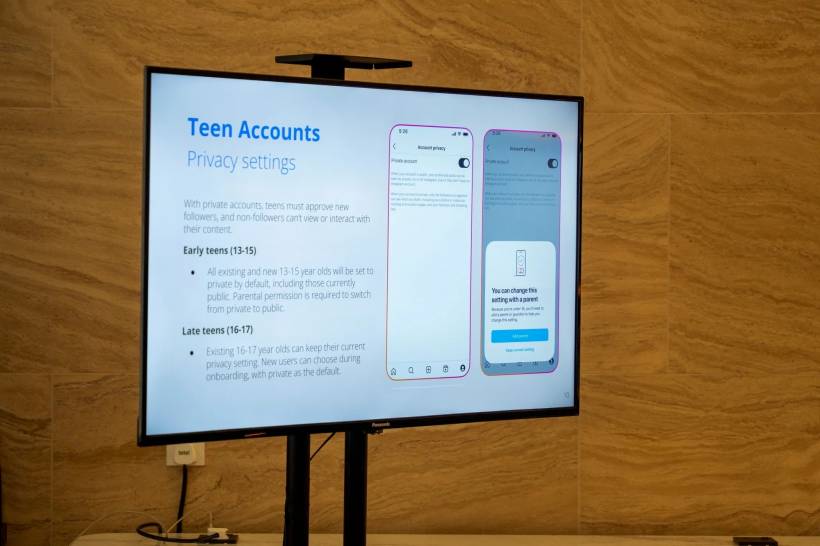

One of the most important parts of Meta's approach is that Teen Accounts are not presented as an extra feature that users need to discover for themselves. Instead, users between the ages of 13 and 17 are automatically placed into these accounts from the start. That means stronger privacy protections are built in by default, with even tighter rules applied to those in the younger teen bracket.

For users aged 13 to 15, Meta says privacy changes generally require parental approval. In other words, younger teens cannot simply turn off key protections on their own whenever they want. That creates a more controlled environment, especially at an age when many users may not fully understand the risks tied to visibility, unwanted contact, or oversharing online.

Meta also says that most users in this younger group keep the default settings in place. That suggests the company's safer-by-default approach is having an effect, at least in terms of limiting how many teens weaken their own protections.

Private by Default and Harder for Strangers to Reach

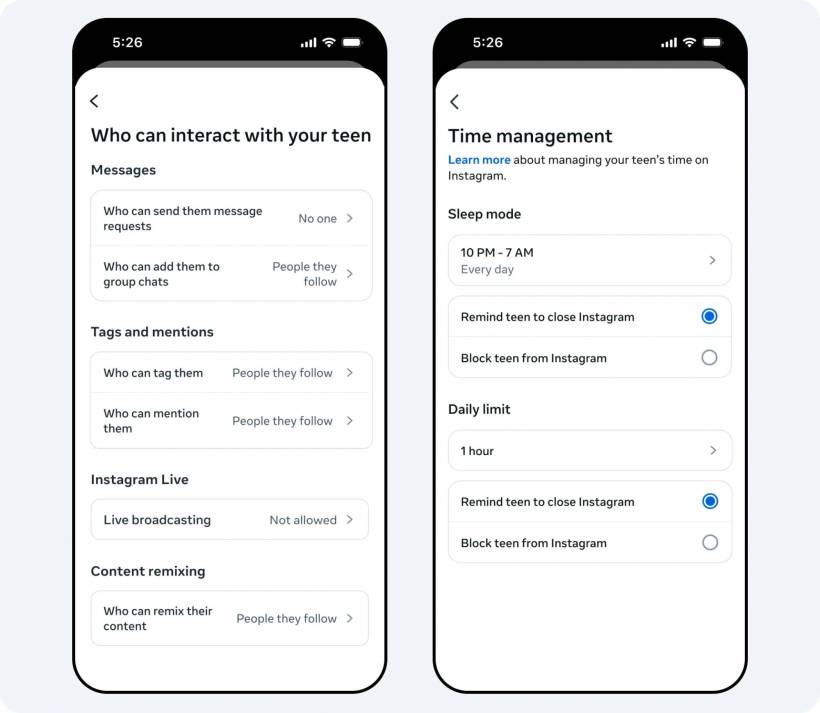

A major concern with teen social media use has always been who can contact them and how easily unknown people can enter their online space. Meta's answer is to lock things down more aggressively from the beginning.

Teen Accounts are automatically set to private, which immediately narrows who can see a teen's content or attempt to interact with them. Messaging is also more tightly controlled. Teen users can receive direct messages only from people they already follow or are connected with, and outreach from strangers is blocked.

That might sound basic, but it addresses one of the biggest real-world risks younger users face online. Many harmful interactions do not begin with public posts. They begin quietly through private messages, fake profiles, or seemingly harmless outreach from people the teen does not actually know. Limiting those contact channels by default makes a real difference.

Meta Is Also Trying to Curb Excessive Use

The company is not focusing only on privacy and stranger contact. It is also trying to shape how long teenagers stay on its apps and when they use them.

Teen users will receive reminders after reaching one hour of daily app use, nudging them to close the app and step away. On top of that, Meta has added a built-in sleep mode that mutes notifications between 10pm and 7am. The idea is to cut down on late-night scrolling, reduce interruptions during rest hours, and encourage healthier digital habits.
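For readers who think in rules, the two time-based controls above can be sketched as simple checks. This is only an illustration of the behaviour described, not Meta's implementation: the function and constant names are hypothetical, and the handling of the exact boundary minutes (10pm counted as quiet, 7am counted as awake) is an assumption. The one detail worth noticing is that the quiet window crosses midnight, so it has to be tested as two ranges joined together.

```python
from datetime import time

# Values come from the article; all names here are hypothetical.
DAILY_REMINDER_MINUTES = 60   # reminder after one hour of daily use
SLEEP_START = time(22, 0)     # 10pm
SLEEP_END = time(7, 0)        # 7am


def in_sleep_mode(now: time) -> bool:
    """Return True if `now` falls inside the overnight quiet window."""
    # The window wraps past midnight, so it is "late evening OR early morning".
    # Assumption: 10pm itself is quiet, 7am itself is not.
    return now >= SLEEP_START or now < SLEEP_END


def should_remind(minutes_used_today: int) -> bool:
    """Return True once daily use reaches the reminder threshold."""
    return minutes_used_today >= DAILY_REMINDER_MINUTES
```

Under these assumptions, a notification arriving at 11:30pm or 6:59am would be muted, while one arriving at 9:59pm or 7:00am would come through as usual.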

These kinds of controls show how youth safety online is no longer being framed only as a content issue. It is increasingly being treated as a wellbeing issue too. Excessive screen time, constant notifications, and late-night usage all affect how teens experience social media, even when the content itself is not overtly harmful.

Meta has also blocked Teen Accounts from creating live broadcasts and from accessing livestreams that have already been flagged as inappropriate for their age group. That adds another layer of friction around more exposed or less predictable forms of online interaction.

Content Filters and Built-In Warnings Add Another Layer

Another part of the Teen Accounts setup involves filtering the kind of content younger users are exposed to. Meta says it automatically limits or removes material containing terms, themes, or language that are considered unsuitable for teens.

That filtering is paired with other built-in safety cues designed to help younger users think a little more carefully before engaging with other accounts. For example, Meta may show contextual information such as when an account joined the platform, along with tips that help teens spot suspicious behaviour or possible scam attempts.

There is also a profanity filter switched on by default, aimed at blocking offensive language and symbols. That may not solve every problem, but it reflects a broader strategy: reduce risk in multiple smaller ways rather than relying on one giant safety switch.

Family Centre Gives Parents More Direct Oversight

Meta is also leaning heavily on parental involvement through its Family Centre hub, which acts as a central place for monitoring and account management tools. Parents who want to supervise or approve changes need to link their own accounts as parent profiles, after which they can take a more active role in shaping how the teen account works.

Through Family Centre, parents can approve privacy-related changes, manage time limits, adjust restrictions, and apply stronger controls where needed. That makes the system more practical for families who want oversight but do not necessarily want to constantly inspect everything manually.

This is one of the more important parts of the whole framework. Digital safety tools often fail not because the tools do not exist, but because they are scattered, confusing, or too easy to ignore. By putting those functions into a single hub, Meta is clearly trying to make parental supervision easier to use in real life.

Meta Says It Is Using Multiple Methods to Spot Underage Users

Age verification remains one of the hardest problems for social media platforms. Anyone can type in a false birth date during sign-up, so companies increasingly have to rely on a mix of signals rather than simple self-reporting.

Meta says it uses a layered detection system that includes registration checks, reports from other users, manual reviews, and AI tools that look at account behaviour. If an account appears to belong to a teenager, Meta may reclassify it into a Teen Account even if it was not originally set up that way. If it suspects the account belongs to a child under 13, the account may be removed altogether.
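The outcome of that process, as described above, comes down to three age bands. The sketch below shows only that final step and assumes some estimated age is already available. How Meta combines registration checks, user reports, manual reviews, and AI signals into an estimate is not described in the source, so nothing here models it, and the names are hypothetical. The article also says an under-13 account "may be" removed, so the first branch is a possible outcome rather than a guaranteed one.

```python
from enum import Enum


class Action(Enum):
    # Hypothetical labels for the outcomes described in the article.
    REMOVE = "account may be removed"
    TEEN_ACCOUNT = "place in Teen Account"
    NO_CHANGE = "no change"


def action_for_estimated_age(estimated_age: int) -> Action:
    """Map an estimated age to the outcome the article describes."""
    if estimated_age < 13:
        # Suspected child under 13: the account may be removed altogether.
        return Action.REMOVE
    if estimated_age <= 17:
        # Ages 13 to 17: reclassified into a Teen Account.
        return Action.TEEN_ACCOUNT
    # Adults are outside the Teen Accounts system.
    return Action.NO_CHANGE
```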

Where necessary, verification may also involve asking for identification documents or other forms of proof of age. This reflects the reality that age enforcement is becoming a bigger part of platform governance, especially in countries that are starting to apply more pressure around youth access.

Teen Advertising Is Being Kept More Limited

Meta also reiterated that users under 18 are not supposed to be targeted with ads based on interest profiling or personal data in the way adults are. Instead, ad targeting for teens is limited to broad factors such as age and general location.

That is an important distinction because it reduces how deeply advertisers can personalise what younger users see. It also suggests that Meta is trying to align its treatment of teens more closely with the way age-sensitive content is handled in other industries, where suitability matters as much as engagement.

Malaysia's Regulatory Direction Is Clearly Part of the Story

All of this is happening as Malaysia moves toward a more restrictive stance on youth social media use. That makes Meta's update feel like more than a routine product announcement. It is also part of a larger shift in how platforms are expected to behave when children and teenagers are involved.

Meta has said that online safety cannot be handled by platforms alone and that regulators, parents, and companies all need to work together. In Malaysia, the company says it is continuing discussions with the Malaysian Communications and Multimedia Commission, while also looking at age-verification approaches being used in other countries.

That framing is important because it shows how the debate is evolving. The old model of simply giving users tools and hoping they use them is no longer enough. Governments increasingly want stronger guardrails in place by default, especially for minors.

Final Thoughts

Meta's updated Teen Account protections in Malaysia show where the industry is heading. Private-by-default settings, restricted messaging, screen-time prompts, sleep mode, content filtering, parental controls, and stricter age checks all point to the same broader idea: social platforms are under growing pressure to make youth safety automatic rather than optional.

For Malaysia, this comes at a time when under-16 access to social media is becoming a much bigger policy issue. That makes Meta's move feel both timely and strategic. Whether these safeguards go far enough is a separate question, but the direction is clear. Social media companies are being pushed to design younger users' experiences around protection first, not just engagement.
