This question keeps popping up because the ground under tech has shifted. AI can now generate working code fast enough that it's tempting to think learning to code is like learning to use a map after everyone got GPS.

In a recent piece on Medium's Data Science Collective, Marina Wyss argues the reality is more uncomfortable and more interesting: AI is changing what "coding" looks like, but it hasn't made coding literacy optional.

Reference (Marina Wyss' article):

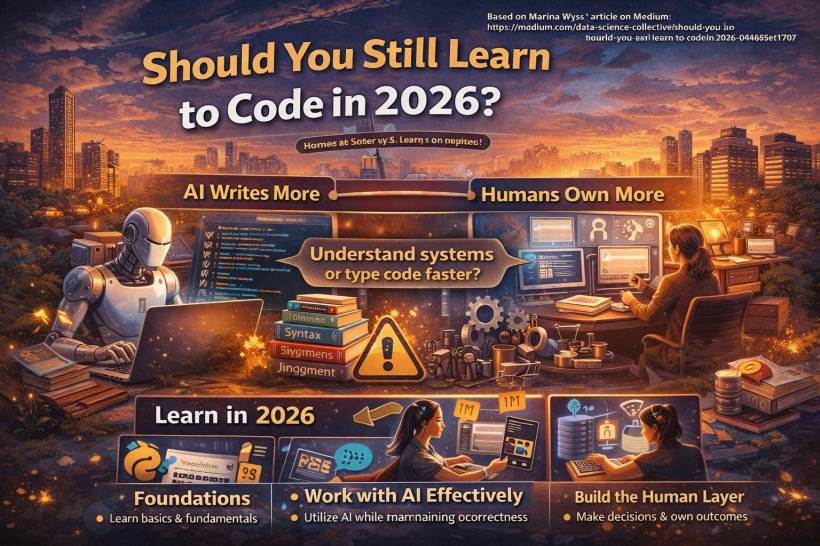

Marina Wyss' Core Point: AI Writes More, Humans Own More

Marina describes a modern workflow where AI produces a huge portion of the code that ships, especially when used well with clear specs and multi-step tooling. The "typing" part shrinks. The "deciding, verifying, and being accountable" part grows.

So the question isn't really "Will I be replaced by AI if I learn to code?" It's closer to: "If AI can produce code, what skills do I need to make sure it's the right code, safely shipped?"

What the Job Numbers Say (And Why the Headline Is Misleading)

The scary-sounding part is real: the U.S. Bureau of Labor Statistics (BLS) projects a 6% decline in employment for the job category "computer programmers" from 2024 to 2034.

But "software developers" is a different story. BLS projects 15% growth for software developers, QA analysts, and testers from 2024 to 2034.

Marina's framing here is important: "programmer" roles traditionally leaned toward translating specs into syntax, while "developer/engineer" roles include design decisions, trade-offs, reliability, and coordination. AI attacks the translation work first, not the judgment work.

The AI Adoption Twist: Everyone's Using It, But Trust Is Dropping

One reason this feels confusing is that AI tools are everywhere in dev workflows now. Stack Overflow's 2025 Developer Survey shows AI use is mainstream, but it also highlights a split: usage is up, while trust in the accuracy of AI output and overall sentiment toward the tools have slipped.

The practical consequence is something Marina emphasizes: AI can get you close quickly, but "almost right" can be the most expensive kind of wrong, because it looks believable until production proves otherwise.
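To make "almost right" concrete, here is a small illustrative sketch (not from Marina's article). Both function names are hypothetical. The first version looks reasonable and passes a casual check, but it silently drops data whenever the input doesn't divide evenly.

```python
def chunk_almost_right(items, size):
    """Plausible-looking version: silently drops a trailing partial chunk."""
    # The "- size + 1" bound stops the loop before any final, shorter slice.
    return [items[i:i + size] for i in range(0, len(items) - size + 1, size)]


def chunk(items, size):
    """Corrected version: keeps the final, shorter chunk."""
    if size <= 0:
        raise ValueError("size must be positive")
    return [items[i:i + size] for i in range(0, len(items), size)]


if __name__ == "__main__":
    even = [1, 2, 3, 4]
    odd = [1, 2, 3, 4, 5]

    # On an evenly divisible input, both versions agree, so a quick demo passes.
    print(chunk_almost_right(even, 2) == chunk(even, 2))

    # On an uneven input, the "almost right" version loses the last item.
    print(chunk_almost_right(odd, 2))
    print(chunk(odd, 2))
```

The bug only shows up on inputs the happy-path demo never tried, which is exactly why reviewing and testing edge cases matters more when the code arrives fast.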

The Part AI Didn't Remove: Before and After the Code

Marina breaks modern software work into three phases:

• Before code: deciding what to build, writing clear specs, and weighing trade-offs

• During code: writing and assembling implementation and tests

• After code: deploying, monitoring, handling incidents, compliance, and explaining outcomes to stakeholders

AI compresses the "during" phase dramatically. But it doesn't erase the "before" and "after." If anything, those phases become more important, because you can now build faster than you can think, which means mistakes scale faster too.

And when something breaks, accountability doesn't go to the tool. It goes to the humans who shipped it. That's the part people conveniently forget when they say "AI will code everything."

So… Should You Learn to Code?

Marina's answer, in spirit, is yes, but with the right goal.

Learn to code so you can understand systems, reason about failure, debug issues, review AI output, and make trade-offs. Don't learn to code because you think your future value is typing curly braces faster than a model.

Coding in 2026 is less about producing code from scratch and more about directing, verifying, and owning outcomes.

A Practical "How to Learn in 2026" Path (The Way Marina Frames It)

Marina's learning approach can be summarized as a progression:

1) Foundations first

Pick one language and get genuinely comfortable. Focus on fundamentals like data flow, APIs, authentication basics, databases, and testing. Use AI to explain concepts, but don't let it replace the struggle that builds understanding.

2) Learn to work with AI without losing correctness

Practice giving constraints, defining "done," generating tests, and reviewing results critically. Small, reviewable changes beat giant AI-generated dumps.
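One way to practice defining "done" is to write the acceptance cases before asking for any code, then run whatever comes back against them. The sketch below is an illustration, not Marina's method. `slugify`, `ACCEPTANCE_CASES`, and `check_done` are hypothetical names, and the `slugify` body stands in for a candidate an AI assistant might produce.

```python
import re
import unicodedata

# "Done", written down as input/expected pairs before any implementation exists.
ACCEPTANCE_CASES = [
    ("Hello, World!", "hello-world"),
    ("  spaces   everywhere  ", "spaces-everywhere"),
    ("Café au lait", "cafe-au-lait"),
    ("", ""),
]


def slugify(title: str) -> str:
    """Candidate implementation (imagine it came back from an AI assistant)."""
    # Strip accents by decomposing characters and dropping non-ASCII marks.
    ascii_text = (
        unicodedata.normalize("NFKD", title)
        .encode("ascii", "ignore")
        .decode("ascii")
    )
    # Collapse every run of non-alphanumeric characters into a single hyphen.
    return re.sub(r"[^a-z0-9]+", "-", ascii_text.lower()).strip("-")


def check_done(func) -> list[str]:
    """Return a list of human-readable failures; an empty list means done."""
    failures = []
    for raw, expected in ACCEPTANCE_CASES:
        actual = func(raw)
        if actual != expected:
            failures.append(f"{raw!r}: expected {expected!r}, got {actual!r}")
    return failures


if __name__ == "__main__":
    problems = check_done(slugify)
    print("done" if not problems else "\n".join(problems))
```

Keeping the cases in one small list also keeps the change reviewable: a reviewer can read the definition of "done" in a few seconds and add the edge case the generated code missed.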

3) Build the "human layer"

This is the part that hiring and real production work care about: writing clear specs, making trade-offs (cost vs performance, speed vs reliability), and developing an incident-response mindset.

Final Thoughts

If you're learning to code in 2026, you're not late. You're just learning in a different era, where the most valuable skill isn't typing code. It's understanding what the code should do, proving that it does it, and being able to explain and defend it when things go sideways.

AI can generate software. But "owning software" is still a human job.
