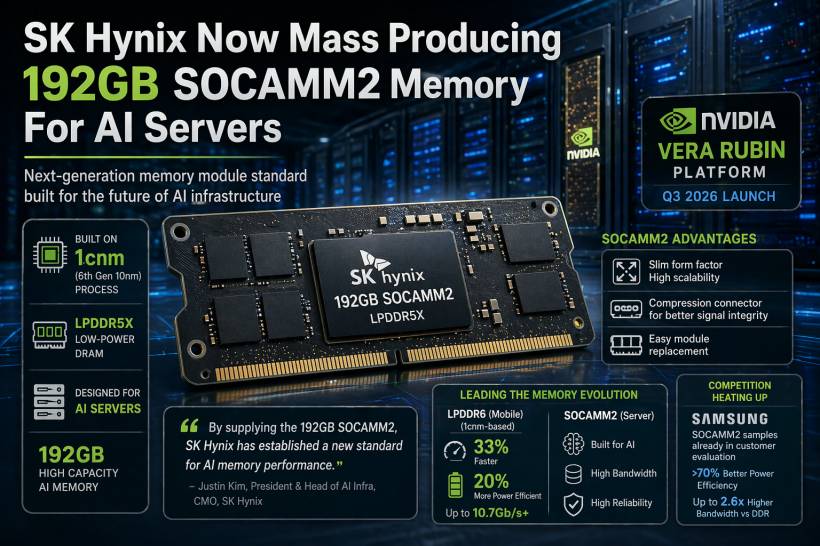

The race to power next-generation AI infrastructure is heating up, and SK Hynix has just made a significant move. The South Korean memory giant has announced that it has begun mass production of its new 192GB SOCAMM2 memory modules, marking another step forward in specialised hardware designed specifically for AI workloads.

This isn't just a routine upgrade. SK Hynix is positioning SOCAMM2 as a next-generation memory standard built for the demands of modern AI servers, combining high capacity, efficiency, and scalability into a compact form factor.

What Exactly Is SOCAMM2?

SOCAMM2, short for Small Outline Compression Attached Memory Module 2, is not your typical server memory. It is designed with AI infrastructure in mind and uses an LPDDR-based architecture rather than the traditional DDR memory commonly found in servers.

The module is built on SK Hynix's 1cnm process, its sixth-generation 10nm-class technology, and uses LPDDR5X low-power DRAM. That combination allows it to deliver strong performance while keeping power consumption under control, a critical factor in large-scale data centres where efficiency directly affects operating costs.

One of the more interesting design aspects is its compression connector, which helps improve signal integrity while also making the module easier to replace. Add to that a slimmer physical design and improved scalability, and SOCAMM2 starts to look like a memory format built for flexibility as much as raw performance.

Designed for NVIDIA's Next AI Platform

A key detail here is that this new memory is being developed with a specific future platform in mind. SOCAMM2 is expected to be used with NVIDIA's upcoming Vera Rubin architecture, which is scheduled for launch in the third quarter of 2026 and is likely to succeed the current Blackwell generation.

That alignment is important because it shows how tightly integrated hardware development has become in the AI space. Memory is no longer just a supporting component. It is being designed alongside GPUs and AI accelerators to ensure the entire system can handle increasingly complex workloads efficiently.

Setting a New Benchmark for AI Memory

SK Hynix is clearly confident about what this product represents. The company describes the 192GB SOCAMM2 as establishing a new benchmark for AI memory performance, reinforcing its ambition to be a leading provider of memory solutions for AI infrastructure.

This is part of a broader strategy where memory manufacturers are no longer competing only on capacity or speed in isolation. Instead, they are focusing on how well their solutions integrate into AI ecosystems, where bandwidth, latency, power efficiency, and scalability all need to be balanced carefully.

Building on Earlier Advances in LPDDR Technology

This announcement also builds on earlier progress from SK Hynix in advanced memory development. Earlier this year, the company introduced what it described as the world's first LPDDR6 RAM chip, based on the same 1cnm process.

That LPDDR6 memory reportedly offers around 33% better performance and 20% better power efficiency compared to LPDDR5X, with data rates reaching 10.7Gb/s and beyond. While LPDDR6 is targeted more toward mobile devices like smartphones, tablets, and handheld gaming PCs, it reflects the same underlying push toward faster and more efficient memory across all segments.
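To put those figures in context, here is a rough back-of-the-envelope sketch in Python. It rests on assumptions that are not part of the announcement: that the 10.7Gb/s figure is a per-pin data rate, that the 33% gain refers to data rate, and that the interface is 64 bits wide (a purely hypothetical width chosen for illustration). The function names are illustrative as well.

```python
def peak_bandwidth_gbytes(pin_rate_gbps: float, bus_width_bits: int) -> float:
    """Theoretical peak bandwidth in GB/s.

    Per-pin data rate (Gb/s) times the number of data pins,
    divided by 8 to convert bits to bytes.
    """
    return pin_rate_gbps * bus_width_bits / 8


def implied_baseline(new_value: float, improvement_pct: float) -> float:
    """Baseline value implied by 'new_value is improvement_pct% better'."""
    return new_value / (1 + improvement_pct / 100)


# Figure quoted in the article.
LPDDR6_PIN_RATE_GBPS = 10.7

# ASSUMPTION: hypothetical 64-bit interface, for illustration only.
ASSUMED_BUS_WIDTH_BITS = 64

bandwidth = peak_bandwidth_gbytes(LPDDR6_PIN_RATE_GBPS, ASSUMED_BUS_WIDTH_BITS)
print(f"Peak bandwidth at {ASSUMED_BUS_WIDTH_BITS} bits: {bandwidth:.1f} GB/s")
# prints "Peak bandwidth at 64 bits: 85.6 GB/s"

# ASSUMPTION: the reported 33% gain is a data-rate gain over LPDDR5X.
baseline = implied_baseline(LPDDR6_PIN_RATE_GBPS, 33)
print(f"Implied LPDDR5X comparison point: {baseline:.2f} Gb/s")
# prints "Implied LPDDR5X comparison point: 8.05 Gb/s"
```

These are theoretical peaks, not measured throughput. Real-world numbers depend on the actual bus width, the memory controller, and the workload.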

Taken together, these developments show a consistent direction: pushing both server-grade and mobile memory toward higher efficiency without sacrificing performance.

Competition Is Already Heating Up

SK Hynix is not alone in this space. Samsung is also moving quickly into the SOCAMM2 segment, and competition between the two South Korean giants is expected to intensify.

Samsung has already begun providing SOCAMM2 samples to customers and claims its own implementation can deliver over 70% better power efficiency and up to 2.6 times higher bandwidth compared to traditional DDR-based server memory.

That kind of competition is likely to accelerate innovation in AI memory even further, as both companies try to secure partnerships with major AI hardware providers and data centre operators.

Final Thoughts

The launch of 192GB SOCAMM2 memory modules highlights how critical memory technology has become in the AI era. As AI models grow larger and more complex, the need for faster, more efficient, and more scalable memory solutions becomes impossible to ignore.

SK Hynix's move into mass production suggests that the industry is no longer just experimenting with new standards; it is preparing to deploy them at scale. With platforms like NVIDIA's next-generation architecture on the horizon and competitors like Samsung pushing similar innovations, the evolution of AI memory is clearly entering a much more serious phase.
